Transparent When You Want It, Private When You Need It

Proven at institutional scale, the Stellar network has processed over 21.5 billion operations since launch across 10 million+ active accounts. Quarterly RWA payment volume reached $5.4 billion.

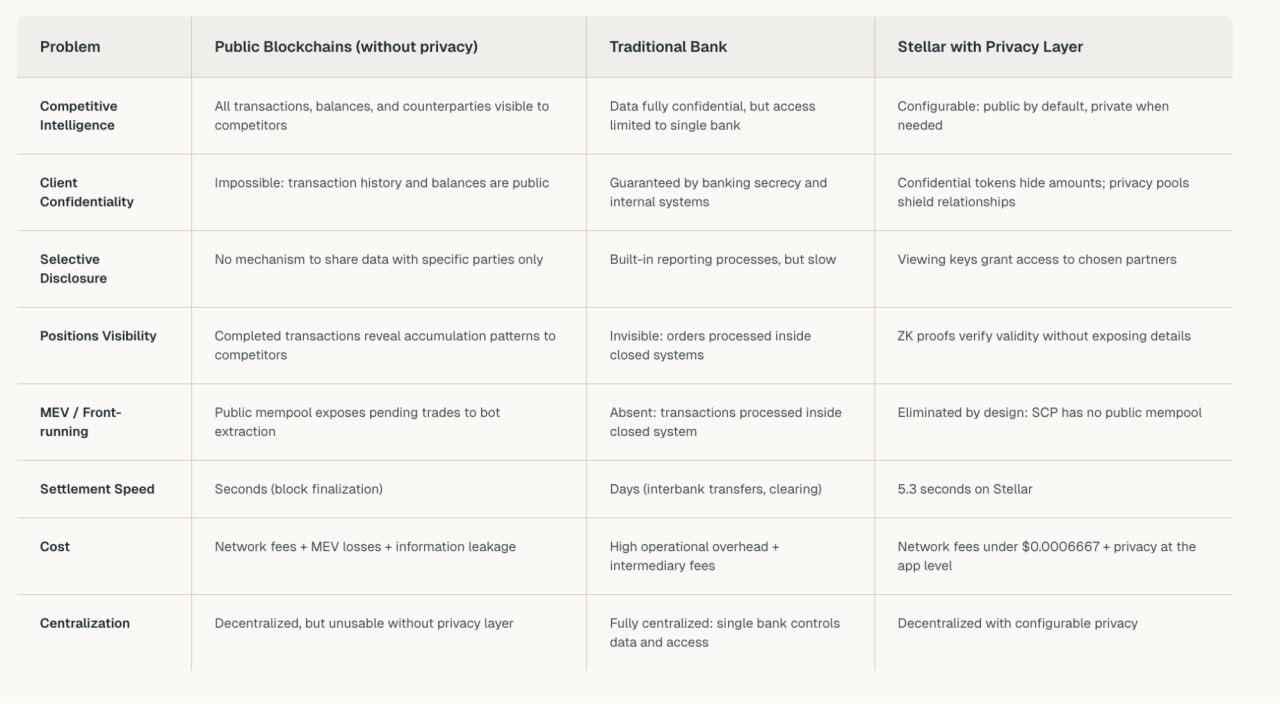

Settlement takes 5.3 seconds, a typical transaction costs $0.0006, and uptime has held at 99.99% for over a decade. That is the kind of reliability that attracted Visa, PayPal, Franklin Templeton, and MoneyGram to build on the network.

Fast. Cost-efficient. Dependable. The payment infrastructure works, but without privacy, institutions can't use it for their most sensitive operations.

By bringing privacy as a configurable layer, Stellar gives institutions and developers what they need for the next wave of adoption: the ability to choose between transparency and privacy when the situation demands it.

The industry recognized this gap years ago. Three architectural approaches emerged, and each one hit a wall.

The visibility problem

Public ledgers create problems for institutions.

Problems that cost money, clients, and competitive advantage.

Here's how visibility becomes a liability.

- Position exposure. An institution accumulates a position over several days. Each purchase is visible on-chain in real time. Competitors track the accumulation pattern and trade against it. By the time the institution finishes buying, the market has already priced in the information through simple observation of public data.

- Counterparty exposure. A client requests privacy for an OTC block trade. Even if the desk splits the order across smaller transactions, the counterparty relationship remains visible on-chain. Who trades with whom is exactly the information competitors exploit: front-running, poaching counterparties, reverse-engineering strategies.

- Selective disclosure. An institution needs to share specific transaction data with a partner while keeping it hidden from the market. That granularity doesn't exist on a public blockchain. Either everyone sees it, or no one does. Without an additional layer, selective visibility remains impossible.

As James Bachini has pointed out on the Stellar developer blog, pseudonymity serves as a thin alias rather than true confidentiality, a temporary mask that slips the moment someone looks closely enough.

In traditional finance, privacy is a given, not an option. Salaries are hidden. Competitors don't know who you work with. Partners only see their part of the deal. Closed systems guarantee this by default, but that guarantee comes at a cost: slow settlements, expensive intermediaries, and centralized control.

On paper, it should be pretty simple. Public blockchains solve this, right? They remove intermediaries and speed up settlements. But with openness comes exposure. You leave yourself vulnerable.

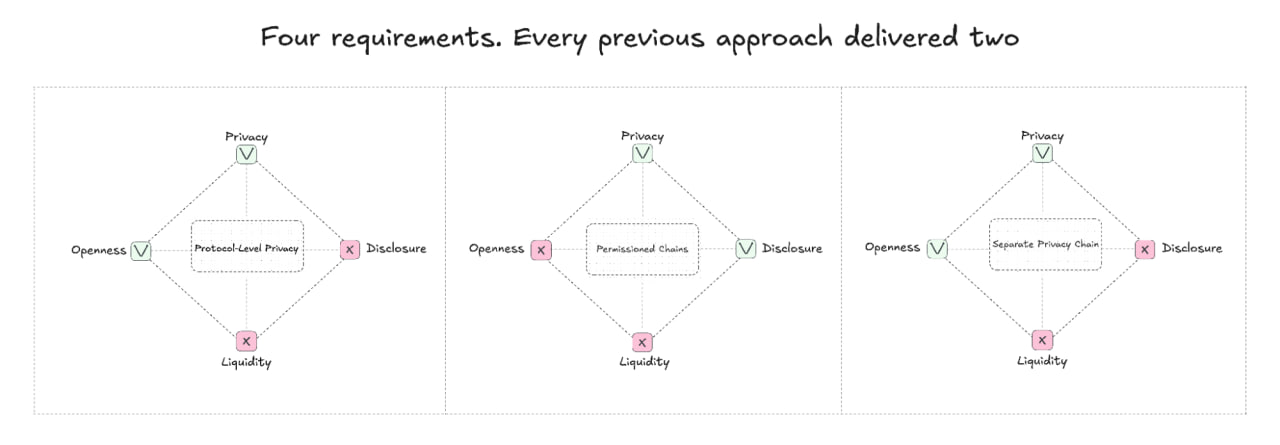

Multiple teams started tackling this problem. Each with a distinct architectural philosophy. However, every approach trapped institutions in a tradeoff they couldn't accept.

Three approaches, three failures

Approach 1: Privacy at Protocol Level

Privacy-first chains built privacy into the foundation. The result: all-or-nothing. Every transaction is hidden, with no selective visibility and no configurability. Institutions don't need a black box. They need a dial. Protocol-level privacy gives them only the black box.

Counterparty screening also breaks down: an institution cannot detect a transfer to a sanctioned address until it has been made. Assets with an opaque history automatically receive high-risk status, trigger enhanced verification, and inflate operating costs by 3-5 times. The result is predictable. Institutional players simply abandon such assets. The privacy that was supposed to attract them becomes the main reason for their departure.

Approach 2: Permissioned Blockchains

A group of banks launches a private network: controlled access, approved validators, reliability on the surface. But centralized management recreates the very limitations that blockchain was supposed to eliminate. If one bank controls the validators, it can unilaterally change fees, rules, and management parameters. This is not a blockchain, but a database with unnecessary steps.

A consortium adds its own risk: in a network of five banks, three can rewrite the protocol, censor transactions, and undo finality. Even without collusion, liquidity fragments as each bank launches its own network and creates isolated islands without network effects. Stagnation is imposed from above: banks block useful updates if they threaten existing revenue streams.

Approach 3: Separate Privacy Chain

A dedicated L2 with native privacy on top of Ethereum is architecturally ambitious. The operational load, however, is prohibitive.

Two systems at once: Ethereum mechanics plus L2 specifics. Double the complexity, double the audit surface, and double the operational costs. Liquidity is spread between the Ethereum mainnet and other L2s.

Institutions require deep markets, and such networks offer less: fewer users, thinner volumes, and fewer market makers. Each bridge transfer adds friction, delay, and additional costs. The result is fragmented liquidity, not a single deep market.

Institutions need four things at once: privacy from competitors, configurable compliance tools, deep liquidity, and openness. Previous approaches delivered two out of four at best. That's not a tradeoff institutions can accept. Stellar takes a different path: make all four available, and let institutions and developers choose what they need for each use case. The question is how.

Infrastructure for Custom Privacy Solutions

Ready-made privacy tools such as confidential tokens and privacy pools cover common use cases. However, every institution carries unique requirements. A bank's payroll contract differs from an OTC desk's trading system, which differs from a healthcare provider's payment flow. The conclusion is simple. Off-the-shelf solutions don't always fit.

Stellar provides the building blocks to construct custom privacy applications. X-Ray (Protocol 25), live on Mainnet since January 2026, introduced native support for zero-knowledge cryptography. This removes a major pain point for developers: they can build with industry-standard tooling on a network that already handles institutional-scale payments. With the why established, the next question is how it actually works.

The Building Blocks

1) BN254: the shared language of zero-knowledge cryptography. BN254 is an elliptic curve whose mathematical structure underpins how ZK proofs are created and verified. It is among the most widely adopted curves in the industry. Ethereum relies on it through its precompiled contracts. Major privacy projects rely on it.

X-Ray didn't just add BN254 support. It embedded it as a native primitive. The distinction matters. On most chains, developers import external libraries to handle ZK math. Every library call burns extra gas, adds verification overhead, and introduces a potential attack surface. Stellar runs BN254 operations at the protocol level, which means faster verification, lower cost, and fewer security assumptions. You get a built-in engine versus a bolt-on attachment.

As a result, developers working with Ethereum-based ZK tools can extend their projects to Stellar without rewriting the core math. A second curve, BLS12-381, is also supported. These two options give developers flexibility to select what fits their use case.

2) Poseidon and Poseidon2: hashing built for ZK. Standard hash functions like SHA-256 perform well for general cryptography. Inside zero-knowledge proofs, however, they're sluggish and expensive. Designed specifically for ZK, Poseidon uses math operations that align naturally with how proofs are computed. In this context it is 10 to 100x more efficient than SHA-256.

The same principle applies as with BN254: natively supported, not imported. Developers avoid costly on-chain reimplementation, maintain consistent hash logic on-chain and off-chain, and get predictable gas costs regardless of proof complexity.

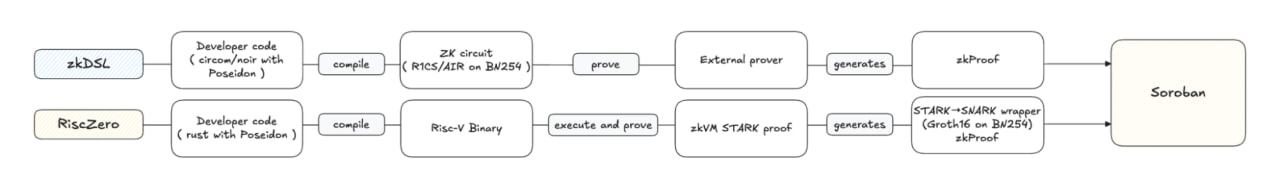

Two paths to ZK on Stellar

Let's explore two approaches. Both produce a proof that Stellar verifies on-chain. The choice depends on how much control you need.

Path 1: zkDSL (Circom / Noir). Maximum control. Privacy logic goes into a specialized language, defining the exact mathematical rules the proof must satisfy. Compiled into a ZK circuit, the code generates a proof through an external tool. That proof is then sent to a smart contract on Stellar for verification. This allows precise control over proof design: custom privacy logic, optimized proof sizes, as well as specific security tradeoffs. This path suits developers who demand that level of granularity or want an efficient path for a known, narrow use case.

Path 2: RISC Zero. Familiar tools and automatic proofs. A regular program in Rust or C++ compiles into a format that a ZK virtual machine can execute. The machine runs the program and automatically generates a proof that the computation was done correctly without exposing the inputs. No deep ZK expertise is required. Write the logic in a language you already know. The proving system handles the cryptography. A RISC Zero verifier is already live on Stellar, so developers can verify zkVM-proven computations directly in a Stellar smart contract.

In contrast, deploying a ZK application on Ethereum + L2 means navigating two execution environments, managing bridging between layers, and auditing two separate security models. Stellar removes that overhead.

Both paths deploy directly as smart contracts: one chain, one execution environment, one security model, with full access to the network's existing liquidity, assets, and ecosystem. Privacy applications aren't isolated. They run on the same infrastructure as every other Stellar app.

Both paths converge on the same destination: a compact proof on the BN254 curve, verified through native operations. Validators check the proof mathematically - they don't know what's inside, only that it's valid.
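To make "valid without knowing what's inside" concrete, here is a minimal Python sketch of a far simpler proof system than the ones named above: a non-interactive Schnorr proof of knowledge. It is not Groth16, it does not use BN254, and it is not how Stellar verifies proofs. The modulus, generator, and function names are all illustrative assumptions. The point is only the shape of the interaction: the verifier sees a public value and a proof, never the secret.

```python
import hashlib
import secrets

# Toy parameters, assumed for illustration only (not production-grade):
# arithmetic in the multiplicative group modulo the prime 2**255 - 19.
P = 2**255 - 19
ORDER = P - 1   # exponents can be reduced mod P-1 (Fermat's little theorem)
G = 2

def challenge(public: int, commitment: int) -> int:
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = f"{public}:{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % ORDER

def prove(secret: int):
    """Prover side: returns the public value and a proof of knowing `secret`."""
    public = pow(G, secret, P)
    k = secrets.randbelow(ORDER - 1) + 1      # fresh one-time randomness
    commitment = pow(G, k, P)
    c = challenge(public, commitment)
    response = (k + c * secret) % ORDER
    return public, (commitment, response)

def verify(public: int, proof) -> bool:
    """Verifier side: checks G^response == commitment * public^c (mod P)."""
    commitment, response = proof
    c = challenge(public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P
```

A caller would run `public, proof = prove(secret)` off-chain and hand only `public` and `proof` to `verify`. In the flow described above, the verifier role is played by a Stellar smart contract using native curve operations instead of this toy group.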

What does this mean for developers and institutions?

The base layer stays unchanged for every new privacy feature. No validator updates. New privacy applications deploy as smart contracts on Stellar, the same infrastructure every other Stellar app uses. Privacy evolves at the speed of application development, not protocol upgrades.

Encrypted payroll? Build it with Stellar smart contracts. Shielded block trades? Build it with Stellar smart contracts. Private patient payments? Build it with Stellar smart contracts. Each use case gets its own privacy logic, its own access rules, and its own disclosure settings. Shared infrastructure, custom applications. With these primitives in place, we have two production-ready models that show what developers can build.

What you can build: two privacy models in practice

On this infrastructure, two privacy models become possible. The first hides amounts but keeps participants visible. The second shields everything - identities, transactions, amounts - inside a verifiable pool. Stellar smart contracts power both while using the same ZK primitives described above.

Not every use case needs the same level of privacy, or the same proving path. Confidential tokens typically rely on zkDSL circuits - precise, efficient, and optimized for a known scope. Privacy pools work well with zkDSL too (Nethermind's implementation demonstrates this), though more complex DeFi or gaming applications are a better fit for zkVM. The choice depends on complexity: narrow, well-defined problems lean toward zkDSL; broader computations lean toward RISC Zero.

Confidential Tokens: When Only Amounts Need Hiding

Not every transaction needs full privacy. In payroll, the relationship is known. Everyone assumes you get paid by the company you work for. What matters is hiding the amount. Confidential tokens protect exactly what needs to be hidden, nothing more. They are an efficient path to privacy where full shielding would be overkill.

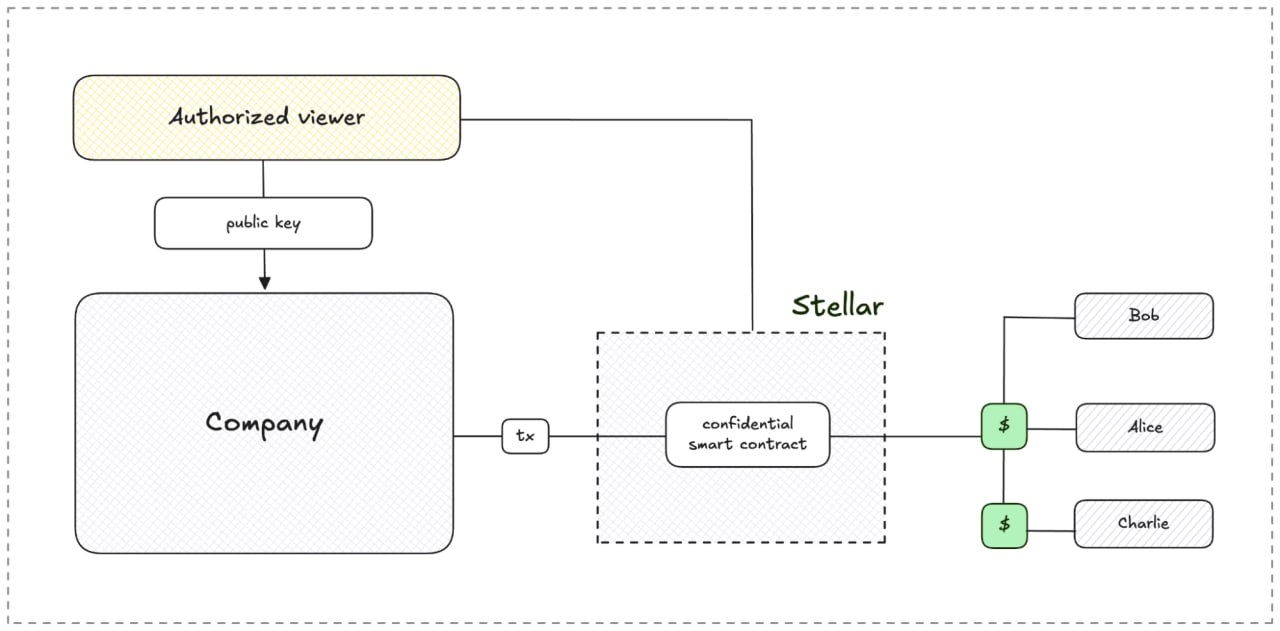

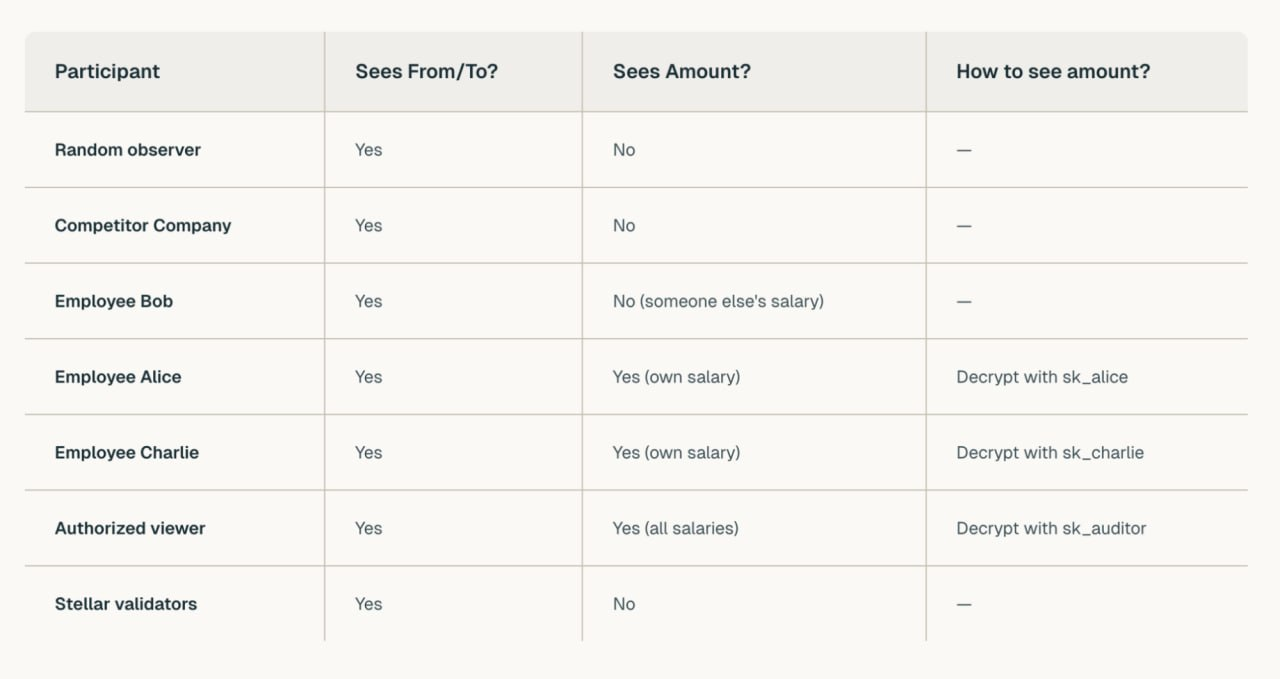

Let's consider a short scenario: “Company X pays salaries to employees Alice and Charlie. Bob also works at the company but isn't receiving payroll through Stellar this cycle.”

How it works:

Step 1: Company X deploys a confidential token smart contract on Stellar. Each payment amount is encrypted before sending, but can be decrypted by authorized viewers: a partner, an auditor, an internal team. These parties are defined at deployment.

Step 2: Payments flow through the contract. A ZK proof verifies each transaction (correct amounts, no double-spending) without revealing the values. Alice's key decrypts her amount. Charlie's key decrypts his. The auditor's key unlocks both. Bob receives nothing this cycle. All amounts stay encrypted to outsiders.

Step 3: Alice sees her salary after decrypting with her private key. Charlie follows the same process. Neither can view the other's amount. Nothing is visible to anyone else.

Step 4: Authorized viewers (a partner, an auditor, an internal team) can view the amounts. These parties are defined when the contract deploys, not granted arbitrarily after the fact. Company X determines the access structure from the start.
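One common way to hide amounts while still letting anyone check that a payroll batch balances is an additively homomorphic commitment. The Python sketch below uses a toy Pedersen-style commitment to illustrate the idea behind the steps above. This is an assumption for illustration: the article does not say which scheme confidential tokens on Stellar use, the modulus and generators here are toy values, and a real system would use elliptic-curve groups plus range proofs. All names (`commit`, `batch_balances`, `opens_to`) are hypothetical.

```python
import secrets

P = 2**255 - 19   # toy prime modulus (assumption, illustration only)
G, H = 2, 3       # toy generators; real schemes pick H so log_G(H) is unknown

def random_blinding() -> int:
    """Random blinding factor that hides the committed amount."""
    return secrets.randbelow(P - 1)

def commit(amount: int, blinding: int) -> int:
    """Commitment G^amount * H^blinding mod P: binds the amount, reveals nothing."""
    return (pow(G, amount, P) * pow(H, blinding, P)) % P

def batch_balances(funding_commitment: int, payment_commitments) -> bool:
    """Public check: the payments multiply to the funding commitment,
    which holds exactly when the hidden amounts (and blindings) add up."""
    product = 1
    for c in payment_commitments:
        product = (product * c) % P
    return product == funding_commitment

def opens_to(commitment: int, amount: int, blinding: int) -> bool:
    """What an authorized viewer can do once given the opening values."""
    return commit(amount, blinding) == commitment

# Company X pays Alice 5000 and Charlie 4000 out of a 9000 payroll budget.
r_alice, r_charlie = random_blinding(), random_blinding()
alice_c = commit(5000, r_alice)
charlie_c = commit(4000, r_charlie)
funding_c = commit(9000, (r_alice + r_charlie) % (P - 1))
```

Outsiders see only `alice_c`, `charlie_c`, and `funding_c`, yet `batch_balances(funding_c, [alice_c, charlie_c])` holds. Alice can confirm her own amount with `opens_to(alice_c, 5000, r_alice)`, and an auditor who holds both openings can confirm both. Here the opening values stand in for the viewer keys described in the steps above.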

How this reflects the three-layer design:

- The base layer stays public. Transactions remain visible to validators: signatures, fees, consensus rules - all verified by the network. At the application level, amounts stay encrypted. The ledger preserves auditability (who, when, validity) for everything except the confidential amounts.

- Privacy at the app level. No protocol changes required. No validator updates needed. The confidential token contract verifies proofs, updates balances, and emits public events. Encryption stays off-chain.

  Each application implements its own privacy logic. The protocol and validators support privacy when users choose it, without having to change anything. For developers, deploying a Stellar smart contract is all it takes to build a confidential payment system. No protocol forks, no separate chains.

- Disclosure is selective, not enforced. Sharing data with a designated party (an auditor, a partner, an internal team) requires access defined at deployment. Real-time access replaces manual reporting, but operational needs drive this decision, not the protocol. Who sees what, and when? That power stays with the institution.

Confidential tokens hide amounts. However, participants remain visible. What about shielding them? How can we implement this?

Privacy Pools: shielding participants

The problem: Office A trades with Office B. Despite the confidentiality of the amounts involved, trading relationships stay exposed. Who trades with whom, and when. In the wrong hands, this information becomes dangerous. Front-running, poaching counterparties, copying strategies - all three benefit competitors.

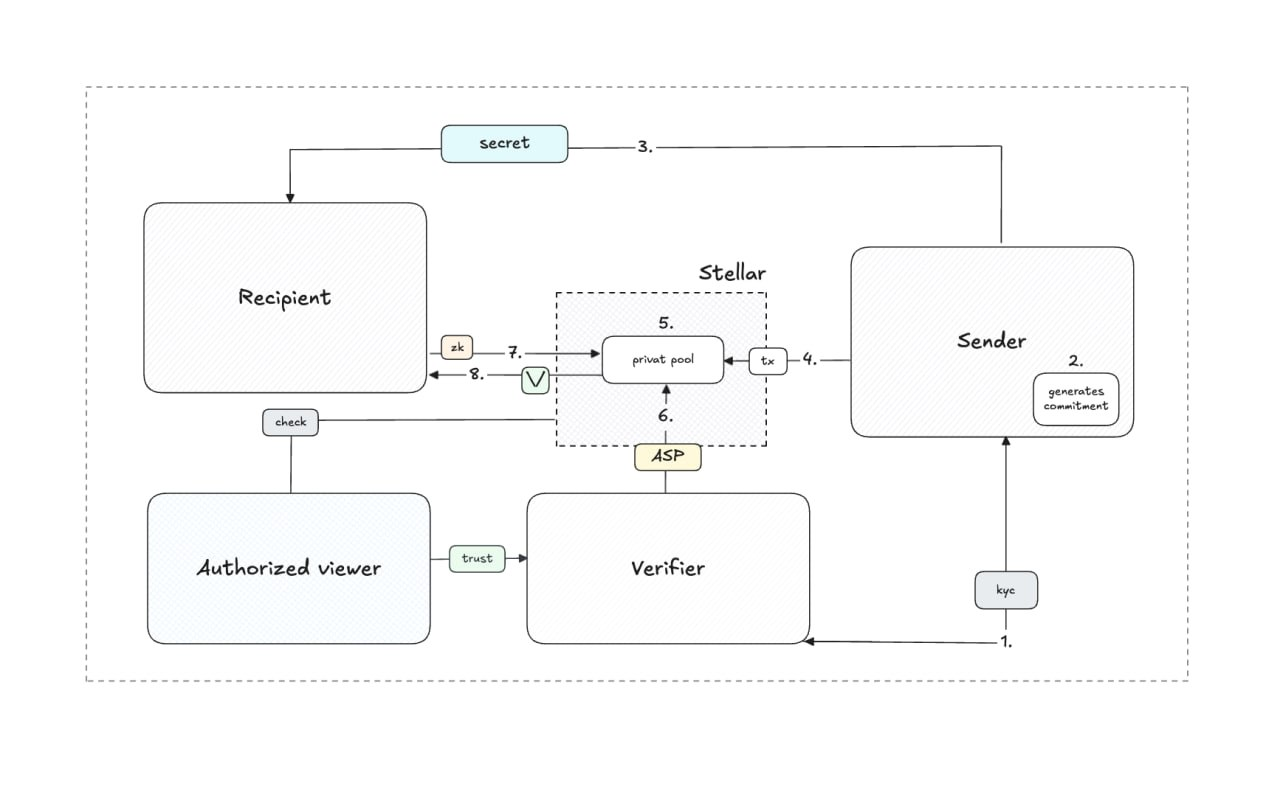

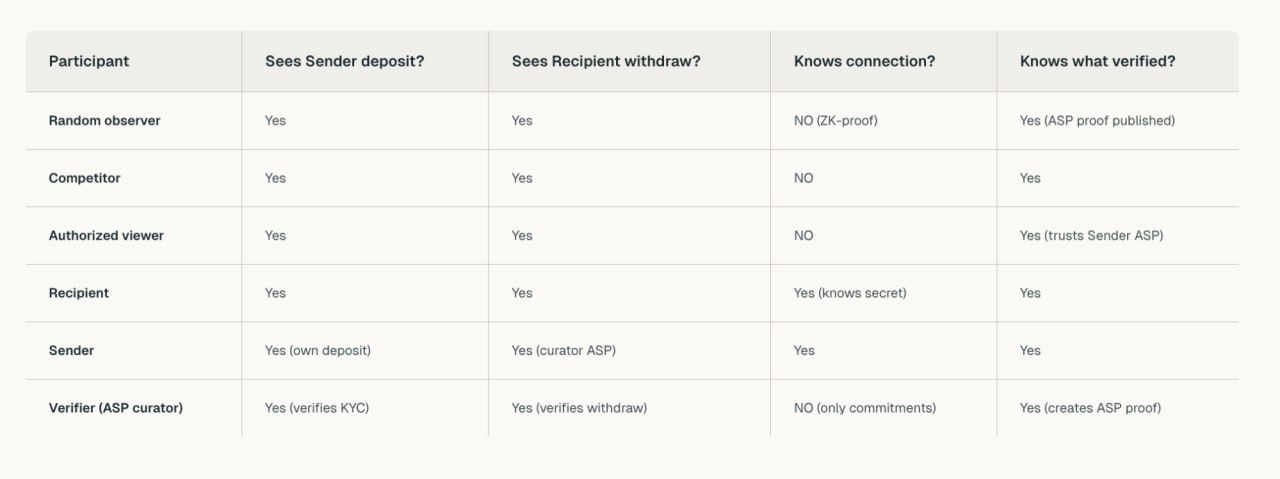

Privacy pools on Stellar sever the on-chain link between sender and recipient. A filtering mechanism strengthens these pools: an association set, a vetted group of eligible participants that defines who can enter the pool. Screened participants pool their transactions together, forming the anonymity group. The larger the group, the stronger the privacy.

How it works:

Step 1: The privacy pool smart contract deploys on Stellar. A third party deploys it and establishes an association set. The criteria are configurable: the deployer decides what qualifies a participant. Only members can deposit and withdraw. The set forms the anonymity group.

Step 2: The sender generates a commitment: a cryptographic hash combining a secret and a nullifier. The commitment goes on-chain; the secret and nullifier stay local. The nullifier serves a critical role: when the recipient later withdraws, its hash is published on-chain. The contract checks that this nullifier was never used before - this is what prevents double spending.

Step 3: Through an encrypted channel, the secret reaches the recipient.

Step 4: The Merkle tree absorbs the commitment and updates the root.

Step 5: The verifier reviews the deposit and includes it in the association set. The commitment is now marked as verified.

Inside the pool, participants aren't idle. Once funds are deposited and verified, the privacy pool becomes an active environment. Participants can transact with each other - payments, settlements, yield strategies. All shielded from outside observation. The pool isn't just a deposit-and-withdraw mechanism. It's a private operating space where institutions interact without exposing their activity to the public ledger.

Step 6: When a participant is ready to exit, the recipient generates a ZK proof that says, "I know the secret behind a commitment that belongs to the association set," without exposing the secret itself.

Step 7: The recipient calls the contract to withdraw. The contract verifies all three (the proof, Merkle tree membership, and association set membership) before releasing funds.
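The deposit and withdrawal steps above can be modeled in a few lines of Python. This is a simplified sketch under loud assumptions: SHA-256 stands in for Poseidon, the association set is a plain set of approved commitments, and the "proof" is the secret and nullifier passed in the clear. A real pool replaces that last part with a ZK proof so neither value is ever revealed. `ToyPool` and the helper names are hypothetical, not a Stellar API.

```python
import hashlib

def h(*parts: bytes) -> bytes:
    """Stand-in hash (assumption: SHA-256 instead of Poseidon); use fixed-length parts."""
    return hashlib.sha256(b"".join(parts)).digest()

def merkle_root(leaves):
    level = list(leaves) or [h(b"empty")]
    while len(level) > 1:
        if len(level) % 2:                  # duplicate the last node on odd levels
            level.append(level[-1])
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

def merkle_path(leaves, index):
    """Sibling hashes from leaf to root, each tagged with 'node is the left child'."""
    path, level = [], list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        path.append((level[index ^ 1], index % 2 == 0))
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return path

def verify_path(leaf, path, root):
    node = leaf
    for sibling, node_is_left in path:
        node = h(node, sibling) if node_is_left else h(sibling, node)
    return node == root

class ToyPool:
    def __init__(self):
        self.commitments = []           # leaves of the deposit Merkle tree (Step 4)
        self.association_set = set()    # commitments approved by the verifier (Step 5)
        self.spent_nullifiers = set()   # nullifier hashes already used

    def deposit(self, commitment: bytes) -> int:
        self.commitments.append(commitment)
        return len(self.commitments) - 1

    def approve(self, commitment: bytes) -> None:
        self.association_set.add(commitment)

    def withdraw(self, secret: bytes, nullifier: bytes, path) -> bool:
        # In a real pool these three checks run against a ZK proof, not raw secrets.
        commitment = h(secret, nullifier)
        nullifier_hash = h(nullifier)
        if nullifier_hash in self.spent_nullifiers:
            return False                # double-spend attempt
        if not verify_path(commitment, path, merkle_root(self.commitments)):
            return False                # commitment is not in the deposit tree
        if commitment not in self.association_set:
            return False                # deposit was never approved
        self.spent_nullifiers.add(nullifier_hash)
        return True
```

In use, the sender calls `deposit(h(secret, nullifier))`, the verifier calls `approve(...)` on that commitment, and the recipient later calls `withdraw(secret, nullifier, merkle_path(pool.commitments, index))`. A second withdrawal with the same nullifier is rejected, which is the double-spend protection described in Step 2.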

Critical details:

Size matters for association sets too. With only two participants, the verifier can deduce who transacted with whom. Genuine privacy demands a sufficiently large anonymity set.

The recipient's on-chain anonymity protects them. Pool participants have been confirmed by the Association Set Provider (ASP). Pool integrity remains verifiable without exposing individual transactions.

Association set proofs differ fundamentally from viewing keys. Rather than decrypting data for a viewer, these proofs work through selective verification: users can share proof of their ASP status with counterparties, while the specific connection between sender and recipient stays invisible to everyone.

What can be revealed? A user can share their ASP proof with an authorized party or a counterparty. What stays hidden is the connection between sender and recipient: it is protected cryptographically, and given a sufficiently large anonymity set it cannot be reconstructed from on-chain data.

How the three layers work together:

- Base layer: public verification. To validators, fund movements stay visible: deposits into the pool, withdrawals out, ZK proofs on-chain. Proof validity, nullifier reuse, signature correctness - all checked by the network. At the infrastructure level, the network remains transparent and auditable.

- Privacy layer: hidden connections. The privacy pool contract stores deposit Merkle trees, association sets, and consumed nullifiers. Without revealing who deposited, the ZK proof demonstrates withdrawal rights. To competitors, trading relationships remain invisible. For developers, a standard interface defines the privacy pool: association sets, Merkle trees, and ZK verification all run on the same smart contract infrastructure as any other Stellar application.

- Verification layer: provable without exposure. Withdrawals demonstrate membership in a screened association set without identifying the specific depositor. Individual transactions stay hidden.

Different problems require different models. Confidential tokens protect amounts. Privacy pools shield relationships. The choice is determined by what you are protecting, and both solutions can be deployed on the same infrastructure.

Why Now

Two forces have now converged, opening a window for institutional privacy on public blockchains:

- Technology has matured and developer tooling has standardized: stable SDKs ship with Circom, Noir, and RISC Zero. The talent pool of ZK specialists continues to expand. No longer experimental, this infrastructure is production-ready.

- Institutional demand has intensified. Asset tokenization is crossing the threshold from pilots to production, but no institution will broadcast payroll, trading positions, or client flows on a transparent ledger. Privacy isn't optional at this scale. Without it, public blockchains lose the institutions they need most.

These forces create opportunity, but they don't determine who captures it. That hinges on timing, and timing in privacy markets follows unusual rules.

Privacy migration dynamics: structural lock-in

Privacy differs from regular assets in one critical way. As Ali Yahya from a16z has observed, asset migration carries minimal friction: you just transfer tokens. Privacy migration is different: it carries substantial switching costs.

Here's why. Migration means surfacing - transactions become visible during the transition between environments. All accumulated privacy history vanishes, and on-chain graph analysis becomes functional again. Add to that the cost of changing infrastructure: redeploying contracts, re-auditing systems, re-integrating partners.

A fresh deployment carries minimal history, so switching costs stay low. Long-term usage builds substantial privacy history, and migration grows structurally unprofitable. Each transaction deepens dependence on the current anonymity set.

Critical mass creates structural advantages

Once a platform reaches critical mass, structural barriers crystallize: established anonymity sets (thousands of participants), concentrated liquidity (no fragmentation), mature ecosystem (proven providers, audited contracts).

Specifically, privacy generates a structural moat through three mechanisms:

- Migration friction intensifies with usage depth. The longer an institution operates within a privacy pool, the costlier switching to another platform becomes.

- Anonymity sets expand with network size. New participants enhance the privacy of existing ones, a positive feedback loop that strengthens over time.

- Liquidity concentrates. Institutions gravitate toward the chain with the deepest privacy liquidity, reinforcing the advantage with every new entrant.

Winner-take-most dynamics

Combined, these three mechanisms produce winner-take-most dynamics.

The first platform to reach institutional critical mass establishes a position that competitors find increasingly difficult to displace - regardless of technical capabilities. Privacy isn't about superior technology. What matters is where the anonymity set and liquidity already reside.

Stellar already moves billions in payments. Now institutions and developers gain the ability to choose when to be public and when to be private - on a network that's already proven.

Transparent when you want it. Private when you need it. Finally, we have both.

REKT serves as a public platform for anonymous authors, we take no responsibility for the views or content hosted on REKT.
